Should the Agentic SOAR Playbook Pull the Trigger? The Math Is Simpler Than You Think

As I previously wrote on LinkedIn from RSAC 2026, OpenClaw Agentic AI attacks are increasing. Yet most SOC teams are keeping the human-in-the-loop action model. In today's AI world, they aren’t being “safe” — they’re choosing slower, more expensive failures.

Every SOAR implementation eventually hits the same wall.

The playbook fires. The recommended action is right there: isolate the workload, block the IP, quarantine the endpoint. The AI is confident. The evidence is solid. And yet — someone built in an approval gate, because nobody wanted to be responsible for the time the automation took down production.

So the alert sits. The analyst is in a meeting. The attacker is not.

This is the human-in-the-loop problem. And most vendors treat it as a philosophical question: how much do you trust your AI? I'm going to argue it's not philosophical at all.

It's math.

"Math can be many things. It can be Art, but it cannot lie.*" — The Life of Chuck

(*Well, truthfully, humans have been using math to lie since time immemorial, but in this case, it is still our best guide to autonomous Agentic SOAR decision-making. Let me show you why.)

A simple decision framework (Value Matrix)

Before I demonstrate how to apply math to SOAR, let me give you the foundation — an analysis tool called the Value Matrix, originally developed by Arijit Sengupta.

Here's the short version for security practitioners.

Almost every AI decision produces one of four outcomes — the classic Confusion Matrix: True Positive, False Positive, True Negative, False Negative. Data scientists obsess over Accuracy and Precision. The Value Matrix makes one critical addition: it assigns a dollar value to each cell.

DIAGRAM: Standard 2x2 Value Matrix — TP/FP/TN/FN

The ROI/Risk math clarifies the problem significantly. Because once you're thinking in costs and benefits instead of accuracy percentages, you stop asking "is the AI right?" and start asking the only question that matters: "What is the cost vs. benefit of each decision?"

Applied to security automation, this becomes the entire ballgame.

Mapping This to Real SOAR Playbook Decisions

For SOAR, the outcomes are straightforward:

True Positive → threat stopped, damage avoided

False Positive → disruption caused

True Negative → nothing breaks

False Negative → attacker continues

The decision is simple:

Is acting now cheaper than waiting?
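One way to make that question concrete is to weight each kind of mistake by how likely it is. The sketch below is an illustration rather than part of the Value Matrix material itself: `p_threat` (the chance the alert is real) and the function names are assumptions introduced here, and "waiting" is modeled simply as paying the false-negative cost whenever the threat turns out to be real.

```python
def expected_cost_of_acting(p_threat: float, fp_cost: float) -> float:
    """Expected loss if the playbook acts now: we pay only when the alert was wrong."""
    return (1.0 - p_threat) * fp_cost


def expected_cost_of_waiting(p_threat: float, fn_cost: float) -> float:
    """Expected loss if the playbook waits: we pay only when the alert was right."""
    return p_threat * fn_cost


def act_now(p_threat: float, fp_cost: float, fn_cost: float) -> bool:
    """True when acting immediately has the lower expected cost."""
    return expected_cost_of_acting(p_threat, fp_cost) < expected_cost_of_waiting(p_threat, fn_cost)


# Hypothetical numbers, chosen only to show the comparison.
print(act_now(p_threat=0.30, fp_cost=1_000, fn_cost=500_000))    # cheap mistake, costly delay
print(act_now(p_threat=0.30, fp_cost=500_000, fn_cost=100_000))  # costly mistake, cheaper delay
```

The same 30% confidence produces opposite answers once the dollar values change, which is the point of pricing the cells instead of staring at accuracy.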

A Practical Framework for Autonomous Action

Every autonomous decision comes down to four factors:

Severity — how bad this gets if it’s real

Time Sensitivity — how fast it gets worse

Blast Radius — what breaks if you act and you’re wrong

Reversibility — how quickly you can undo the action

In practice, these collapse into decision bands:

Act automatically

Act with lightweight review

Require human approval

Never automate

You can assign weights. You can model it.

But:

This is not a scientific constant. It is a configurable operational heuristic.

The goal is not perfect math.

The goal is consistent, explainable decisions under pressure.
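As a rough sketch of how the four factors might collapse into bands, here is one possible scoring scheme. Everything numeric in it is a placeholder: the weights, the band cut-offs, the 0-to-1 scales, and the names `ActionProfile`, `autonomy_score`, and `decision_band` are assumptions made for illustration, not values from any SOAR product.

```python
from dataclasses import dataclass

# Hypothetical weights and cut-offs: tune these per environment.
WEIGHTS = {"severity": 0.3, "time_sensitivity": 0.3, "blast_radius": 0.2, "reversibility": 0.2}
BANDS = [  # (minimum score, band), checked from highest to lowest
    (0.75, "act automatically"),
    (0.55, "act with lightweight review"),
    (0.30, "require human approval"),
    (0.00, "never automate"),
]


@dataclass(frozen=True)
class ActionProfile:
    severity: float          # 0..1, how bad it gets if the threat is real
    time_sensitivity: float  # 0..1, how fast it gets worse
    blast_radius: float      # 0..1, what breaks if we act and are wrong
    reversibility: float     # 0..1, how quickly the action can be undone


def autonomy_score(p: ActionProfile) -> float:
    # Severity, urgency and reversibility push toward autonomy;
    # blast radius pushes away from it, so it is inverted.
    return (
        WEIGHTS["severity"] * p.severity
        + WEIGHTS["time_sensitivity"] * p.time_sensitivity
        + WEIGHTS["blast_radius"] * (1.0 - p.blast_radius)
        + WEIGHTS["reversibility"] * p.reversibility
    )


def decision_band(p: ActionProfile) -> str:
    score = autonomy_score(p)
    for minimum, band in BANDS:
        if score >= minimum:
            return band
    return BANDS[-1][1]


isolate_workload = ActionProfile(severity=0.9, time_sensitivity=0.9, blast_radius=0.2, reversibility=0.9)
database_offline = ActionProfile(severity=0.8, time_sensitivity=0.6, blast_radius=0.95, reversibility=0.2)
print(decision_band(isolate_workload))
print(decision_band(database_offline))
```

With these made-up inputs, the easily reversed isolation lands in the automatic band and the database shutdown lands behind a human approval gate. Because the weights and cut-offs are plain data, they can be reviewed and argued about like any other configuration.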

Three Examples That Show the Math in Action

Example 1: Act Now — Even Though It Looks Scary

Scenario: Active lateral movement detected.

Attacker has compromised a cloud workload and is pivoting toward the domain controller. SOAR recommends: isolate the compromised workload immediately.

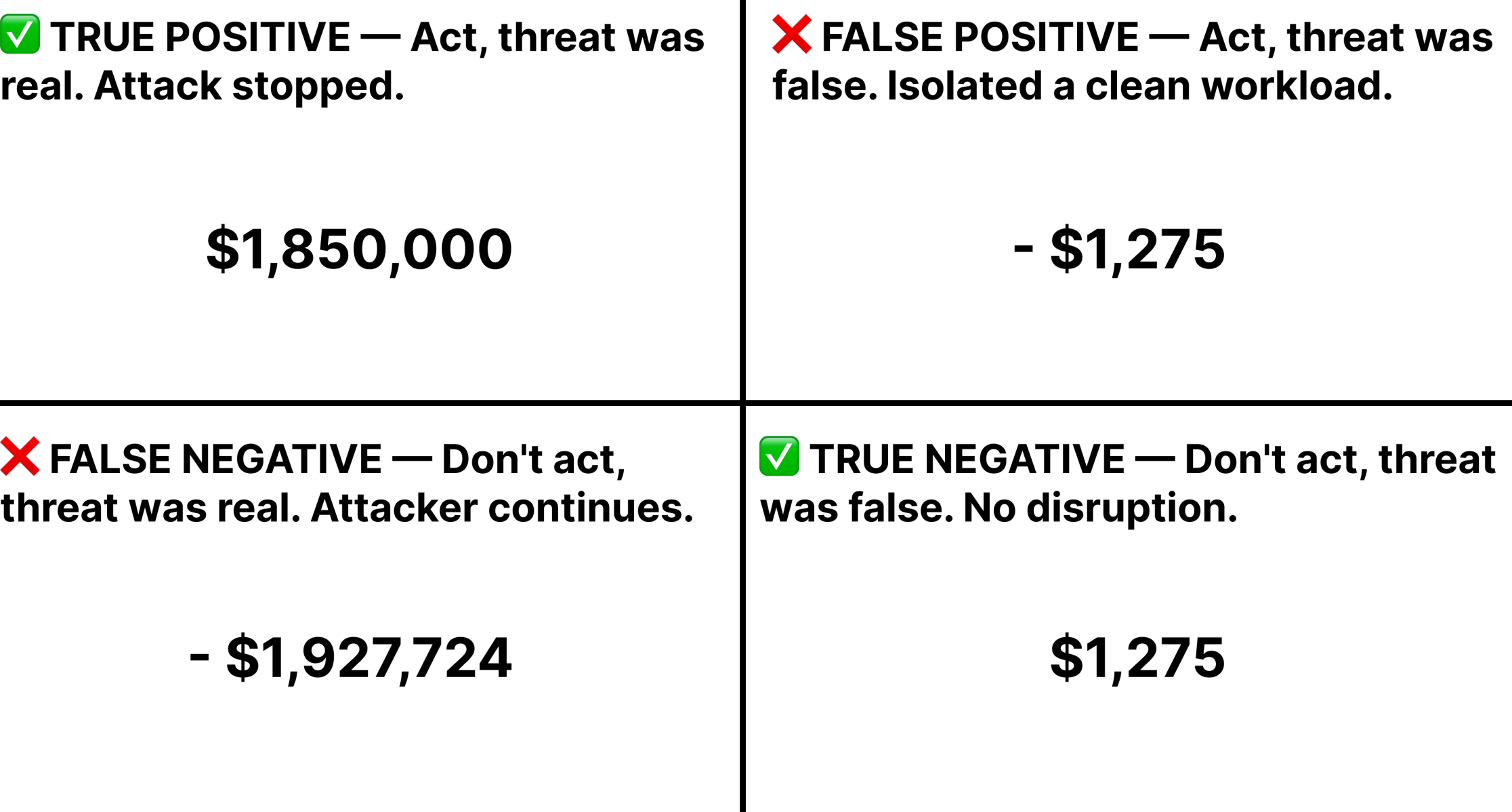

DIAGRAM: Value Matrix Example 1 — high TP value (attack stopped), low FP cost (workload isolation reversible in minutes), high FN cost (domain controller compromise, ransomware), low TN (no action needed here). → Act autonomously.

Here's the detailed math breakdown (see References section for sources and calculations):

✅ TRUE POSITIVE: Attack stopped

Ransom avoided: $1,000,000

Recovery avoided: $530,000

Downtime avoided: $247,000

Legal/IR avoided: $73,000

TP Value: $1,850,000

❌ FALSE POSITIVE: Clean workload isolated

Productivity loss: $1,200

IT restore: $75

FP Cost: $1,275

❌ FALSE NEGATIVE: Attack continues

Full breach cost: $1,850,000

Delay impact: $77,700

FN Cost: $1,927,700

Decision

Cost of acting wrong: ~$1K

Cost of waiting: ~$1.9M

Reversibility: high

Blast radius: low

👉 Act now. Ask forgiveness never.

The $77,700 approval delay cost is derived from Huntress 2025 data: fastest RaaS groups (Akira, RansomHub) achieve time-to-ransom in 6 hours. A 15-minute human approval gate consumes 4.2% of that window — during which the attacker is actively pivoting.
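The arithmetic behind that figure can be reproduced in a few lines. This is a reconstruction under one assumption: that the delay cost is the rounded share of the attack window (4.2%) applied linearly to the $1,850,000 breach cost. The constant names are introduced here for illustration.

```python
TIME_TO_RANSOM_MIN = 6 * 60   # fastest RaaS groups, per the Huntress figure quoted above
APPROVAL_GATE_MIN = 15        # human approval delay assumed in this example
BREACH_COST = 1_850_000       # full breach cost from the breakdown above

window_share = APPROVAL_GATE_MIN / TIME_TO_RANSOM_MIN  # 15 / 360
rounded_share = round(window_share, 3)                 # rounded to one decimal of a percent
delay_cost = rounded_share * BREACH_COST               # assumes cost scales linearly with time

print(f"{rounded_share:.1%} of the window, about ${delay_cost:,.0f}")
```

Using the unrounded share (15/360) gives a slightly lower figure of roughly $77,000, so the estimate is not sensitive to the rounding.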

The action looks drastic. Isolating a production workload triggers alarms. But the math:

FN cost: Active attacker reaching domain controller = potentially catastrophic, costs compounding every second.

FP cost: Workload isolation = reversible in minutes, brief disruption for one service.

Reversibility: High. You can un-isolate in under 5 minutes.

Waiting 15 minutes for human approval while an attacker pivots toward your crown jewels is not a conservative choice. It's an expensive one.

Example 2: Hold — Even Though It Looks Urgent

Scenario: Anomalous traffic spike on production database.

Pattern matches potential data exfiltration. SOAR recommends: take the database offline immediately.

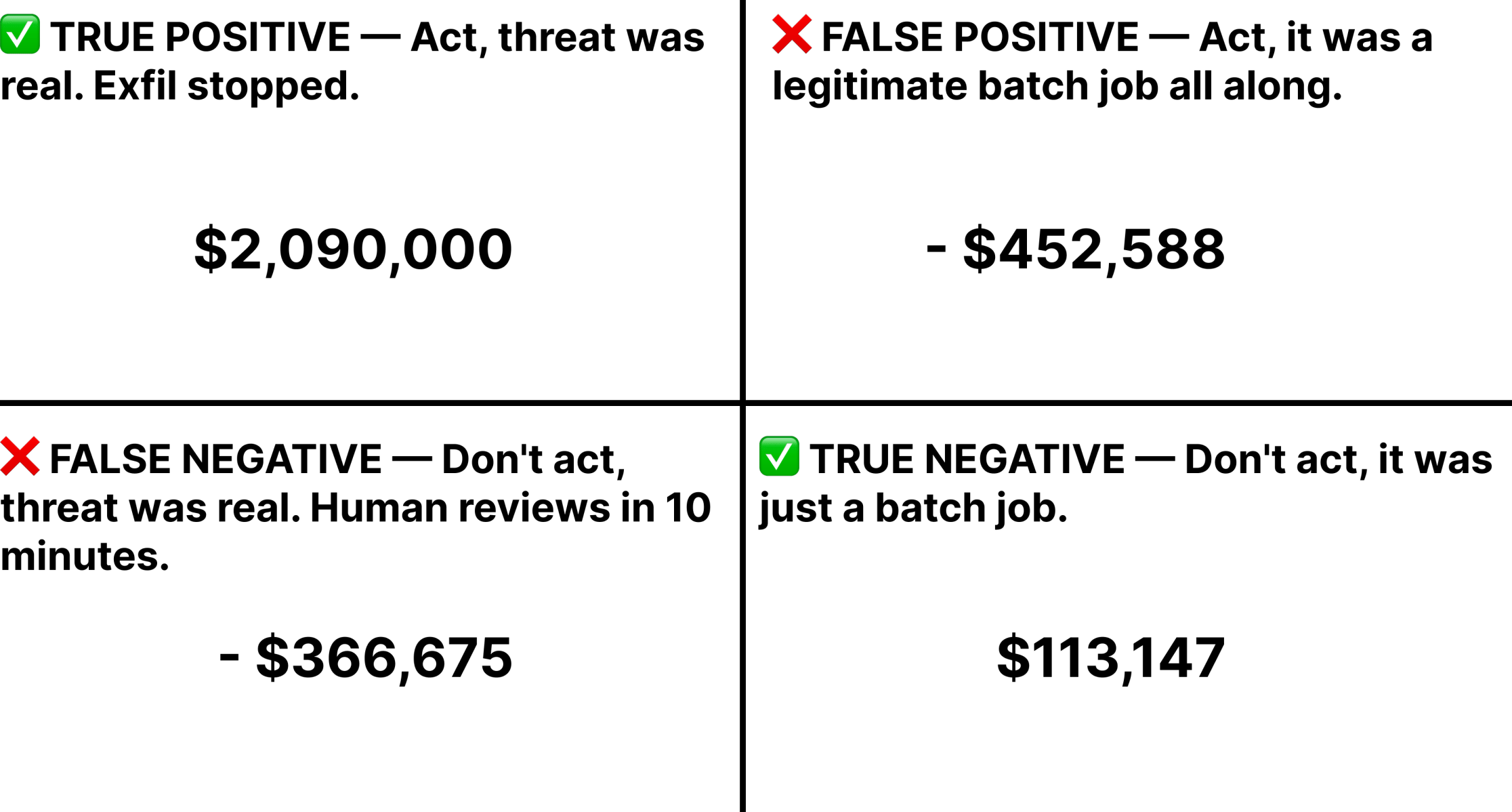

DIAGRAM: Value Matrix Example 2 — moderate TP value (exfil stopped, but data may already be moving), very high FP cost (every customer app goes down for hours), high FN cost (ongoing exfil), BUT high irreversibility of action = multiplies FP cost dramatically. → Human approval gate.

Here's the detailed math breakdown (see References section for sources and calculations):

✅ TRUE POSITIVE: Exfil stopped

TP Value: ~$2.09M

❌ FALSE POSITIVE: Legitimate workload disrupted

Revenue loss

Productivity loss

Customer impact

Recovery time

FP Cost: ~$452K

❌ FALSE NEGATIVE: Delay during review

FN Cost: ~$366K

Decision

Cost of acting wrong: ~$452K

Cost of waiting: ~$366K

Reversibility: low

Blast radius: very high

👉 Require human approval

Note: 60-minute exfil window is a conservative assumption for a detected, active exfil attack on a mid-size DB. Real-world window varies by attacker tooling and connection speed.

This one looks urgent. Possible exfiltration in progress. But the math:

FP cost: Every customer-facing application goes offline. That's a 4-hour recovery, minimum.

Reversibility: Low. You can't un-ring that bell for hours.

FN cost: Data may already be moving — but waiting 3 minutes for a human approval costs almost nothing compared to a 4-hour outage triggered by a spike that turned out to be a legitimate batch job.

Here, the human approval gate makes sense: the 3-minute delay is worth it. The irreversibility multiplier is what makes this different from Example 1. Same urgency signal, opposite answer.

Example 3: Act — Even Though It Looks Dangerous

Scenario: Phishing link clicked by one user.

Command-and-control callback detected. Active C2 channel established. SOAR recommends: block outbound IP immediately.

DIAGRAM: Value Matrix Example 3 — high TP value (C2 channel severed before lateral movement), low FP cost (one user, one session blocked), very high FN cost (active C2 channel = lateral movement clock is running), high reversibility (IP block removed instantly if wrong). → Act autonomously.

Here's the detailed math breakdown (see References section for sources and calculations):

✅ TRUE POSITIVE: Breach prevented

TP Value: ~$2.09M

❌ FALSE POSITIVE: Legitimate traffic blocked

FP Cost: ~$39

❌ FALSE NEGATIVE: C2 remains active

FN Cost: ~$913K

Decision

Cost of acting wrong: negligible

Cost of waiting: massive

Reversibility: immediate

Blast radius: minimal

👉 Act automatically

Note: CrowdStrike 2025 data shows fastest attacker breakout = 51 seconds. If this attacker is faster than average, the $913K figure understates the FN cost dramatically.

The action sounds aggressive — blocking outbound traffic. But the math:

FP cost: One user's one session is disrupted. They might notice a connectivity blip.

Reversibility: Extremely high. You can whitelist the IP in seconds if you were wrong.

FN cost: Active C2 channel with an established beachhead is lateral movement waiting to happen. Every minute of delay is opportunity.

Block first. Whitelist later if needed. The combination of high reversibility and high FN cost makes this an easy autonomous call — even though "blocking outbound traffic" sounds dangerous in the abstract.
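The three examples can also be compared on a single number: how confident the detection has to be before acting is cheaper than waiting. The sketch below uses the FP and FN costs quoted in the examples. The break-even formula is an addition made here, not something from the sources: acting wins when (1 - p) × FP < p × FN, which rearranges to p > FP / (FP + FN). It deliberately ignores the reversibility and blast-radius factors.

```python
def break_even_confidence(fp_cost: float, fn_cost: float) -> float:
    """Probability the threat is real above which acting costs less than waiting."""
    return fp_cost / (fp_cost + fn_cost)


# (FP cost, FN cost) in USD, as quoted in the three examples.
EXAMPLES = {
    "1: isolate workload": (1_275, 1_850_000 + 77_700),
    "2: take database offline": (452_000, 366_000),
    "3: block outbound IP": (39, 913_000),
}

for name, (fp_cost, fn_cost) in EXAMPLES.items():
    threshold = break_even_confidence(fp_cost, fn_cost)
    print(f"Example {name}: act if confidence > {threshold:.4%}")
```

Examples 1 and 3 break even at well under 1% confidence, so almost any credible signal justifies acting. Example 2 needs roughly 55% confidence before acting even breaks even, and that is before accounting for the hours it takes to undo a mistake.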

But Wait, It Gets Smarter Over Time

The autonomy scoring described above (call it the Playbook Autonomy Score) is not a static configuration. The best implementations treat it as a living system, and this is where the gap between current SOAR platforms and what's actually possible becomes most visible.

Every autonomous action the playbook takes is a data point. Was the workload actually compromised? Did the blocked IP resolve to a known C2 server? Did the analyst override the decision — and what did they find? Two feedback loops run in parallel: explicit (analysts mark actions correct or incorrect) and implicit (outcome tracking — was the threat real, did the action stop it, was there collateral damage?).

Over time, this creates something genuinely valuable: organizational memory that is specific to your stack. The playbook doesn't just know what the vendor shipped — it knows what worked in your AWS environment, against your traffic baselines, with your crown jewel asset topology.
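A minimal sketch of the two feedback loops might look like the following. The class and field names (`Outcome`, `FeedbackLog`, `analyst_verdict`) are hypothetical, and the rule that an explicit analyst verdict overrides the implicit outcome signal is an assumption made for the example.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class Outcome:
    action_class: str                       # e.g. "block_ip" (hypothetical label)
    threat_was_real: bool                   # implicit loop: outcome tracking
    analyst_verdict: Optional[bool] = None  # explicit loop: None until someone reviews it


def action_was_correct(outcome: Outcome) -> bool:
    # Assumption: an explicit analyst verdict, when present, wins over the implicit signal.
    if outcome.analyst_verdict is not None:
        return outcome.analyst_verdict
    return outcome.threat_was_real


class FeedbackLog:
    def __init__(self) -> None:
        self._by_class = defaultdict(list)

    def record(self, outcome: Outcome) -> None:
        self._by_class[outcome.action_class].append(outcome)

    def false_positive_rate(self, action_class: str) -> Optional[float]:
        """Observed share of actions in this class that turned out to be wrong."""
        seen = self._by_class.get(action_class)
        if not seen:
            return None  # no history yet, so fall back to vendor defaults
        wrong = sum(1 for o in seen if not action_was_correct(o))
        return wrong / len(seen)


log = FeedbackLog()
log.record(Outcome("block_ip", threat_was_real=True))
log.record(Outcome("block_ip", threat_was_real=True))
log.record(Outcome("block_ip", threat_was_real=False))
log.record(Outcome("block_ip", threat_was_real=False, analyst_verdict=True))
print(log.false_positive_rate("block_ip"))
```

An observed rate like this is what would feed back into the cost estimates for your environment, replacing the vendor's generic assumption with your own history.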

This is the Onion Model applied to security automation. As I described in my article "Memory Management Done Right: The Onion Model", a well-designed AI memory architecture has three layers: a core identity layer (slow to change), a project layer (accumulates working context), and a conversation layer (real-time). For SOAR, the analog is: default vendor playbook behavior (core of the onion), environment-specific learned thresholds (working context), and the live investigation context (real-time).

The critical design requirement: this memory cannot be a black box.

The learned thresholds, the confidence adjustments, the environmental overrides — all of it must be inspectable and editable by a human at any time. A CISO should be able to ask the system: "Why did you act autonomously on this class of event?" and receive a legible answer. They should be able to modify the behavior directly. They should be able to see exactly what the system has learned — and easily correct the behavior using natural language, in a transparent, reliable, repeatable way.
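To show what "inspectable and editable" could mean in practice, here is a small sketch that layers thresholds the way the Onion Model layers memory and reports which layer supplied each value. The keys, the numbers, and the `explain` helper are invented for illustration, and the natural-language editing described above is out of scope here. The layering itself uses Python's standard `collections.ChainMap`.

```python
from collections import ChainMap

# Three layers, innermost last. All keys and values are hypothetical.
vendor_defaults = {"isolate_workload.min_confidence": 0.80, "block_ip.min_confidence": 0.60}
environment_learned = {"block_ip.min_confidence": 0.40}  # learned from this environment's history
live_context = {}                                        # per-investigation overrides

LAYERS = [
    ("live investigation", live_context),
    ("environment-learned", environment_learned),
    ("vendor default", vendor_defaults),
]

# Lookups search the layers in order, so outer layers shadow inner ones.
thresholds = ChainMap(*(layer for _, layer in LAYERS))


def explain(key: str) -> str:
    """Report the effective value and the layer it came from."""
    for name, layer in LAYERS:
        if key in layer:
            return f"{key} = {layer[key]} (from {name} layer)"
    raise KeyError(key)


print(explain("block_ip.min_confidence"))
print(explain("isolate_workload.min_confidence"))

# A human correcting what the system learned is an ordinary, visible edit.
environment_learned["block_ip.min_confidence"] = 0.50
print(thresholds["block_ip.min_confidence"])
```

Because each layer is a plain dictionary, a reviewer can diff what the system learned against what the vendor shipped, and removing a learned entry restores the default.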

Opacity is not a feature. It's a liability. And most SOAR vendors are shipping systems that learn silently, adjust quietly, and offer no window into what they've decided. That's not AI-assisted security. That's a HAL 9000: confidently wrong, dangerously autonomous, impossible to debug, and deadly to the mission and crew.

The vendors who build inspectable, mutable, layered organizational memory into their playbook systems will win. The ones who treat "learns over time" as a marketing bullet point without the inspection layer will eventually cause an incident they can't explain — and lose the account.

What This Means for Product Teams

Most playbook libraries are built around what's technically possible: here are 1,000 actions we can take. In a world where OpenClaw AI Agents perform successful attacks autonomously in just 27 minutes, we should be building SOAR around the Value Matrix: here are the default costs and benefits of each action, here is how you configure your thresholds, and here is exactly what the system has learned from your environment.

Every playbook ships with default parameters — severity weights, blast radius classification, reversibility ratings. The system applies the Value Matrix math on every decision. The memory layer updates the weights over time. Humans inspect and override at any point.

This isn't a vision. It's a product spec. It's the missing layer in every SOAR platform I've seen.

I know because I helped build one: the SOAR integration at Sumo Logic, which took two years of customer research, redesign, and organizational alignment. The automation wasn't the hard part; the hard part was always the autonomous judgment layer. And with the Value Matrix framework, the judgment can be math that you can actually defend.

References

Methodology

Mid-size business parameters I'm using:

500 employees, ~$75M annual revenue

Security analyst: $100K/year = $48/hour

IT engineer (fully loaded): ~$75/hour

Based on: Sophos 2025, Mandiant M-Trends 2025, Huntress 2025 Threat Report, IBM Cost of Data Breach 2025

Key figures mapped to sources:

$1,000,000 median ransom → Sophos 2025

$1,530,000 average recovery cost → Sophos 2025 / Total Assure 2026

24-day average downtime → Acronis 2025 / PurpleSec 2025

6-hour time-to-ransom (fastest RaaS groups) → Huntress 2025

11-day median dwell time → Mandiant M-Trends 2025

$5.08M average total ransomware cost (enterprise) → IBM 2025 (used as ceiling sanity check, not in mid-size calc)

Example 1

Acronis. (2025). The cost of ransomware: Why every business pays, one way or another. https://www.acronis.com/en/blog/posts/cost-of-ransomware/

DeepStrike. (2025). Ransomware statistics 2025: Attack rates and costs. https://deepstrike.io/blog/ransomware-statistics-2025

Google Threat Intelligence Group / Mandiant. (2025). M-Trends 2025 report. https://services.google.com/fh/files/misc/m-trends-2025-en.pdf

Huntress. (2025). Hackers ramp up efficiency, speed, and scale in 2024. https://www.huntress.com/press-release/hackers-ramp-up-efficiency-speed-and-scale-in-2024-targeting-business-of-all-sizes

IBM Security. (2025). Cost of a data breach report 2025. https://www.ibm.com/reports/data-breach

PurpleSec. (2025). The average cost of ransomware attacks (updated 2025). https://purplesec.us/learn/average-cost-of-ransomware-attacks/

Sophos. (2025). The state of ransomware 2025. https://www.sophos.com/en-us/content/state-of-ransomware

Total Assure. (2026, February 4). Average cost of a ransomware attack in 2025. https://www.totalassure.com/blog/average-cost-ransomware-attack-2025

Example 2

BlackFog. (2025, July 25). Data exfiltration extortion now averages $5.21 million according to IBM's report. https://www.blackfog.com/data-exfiltration-extortion-5m/

Erwood Group. (2025, June 16). The true costs of downtime in 2025: A deep dive by business size and industry. https://www.erwoodgroup.com/blog/the-true-costs-of-downtime-in-2025-a-deep-dive-by-business-size-and-industry/

IBM Security. (2025). Cost of a data breach report 2025. https://www.ibm.com/reports/data-breach

ITIC. (2024). 2024 hourly cost of downtime report. https://itic-corp.com/itic-2024-hourly-cost-of-downtime-report/

N-able. (2025, November 20). The true cost of downtime: Why cyber resilience matters. https://www.n-able.com/blog/true-cost-of-downtime

Example 3

CrowdStrike. (2025). 2025 global threat report: Adversary breakout times. https://www.crowdstrike.com/en-us/global-threat-report/

DeepStrike. (2025). Phishing statistics 2025: Costs, AI attacks & top targets. https://deepstrike.io/blog/Phishing-Statistics-2025

ExpressVPN / Cybersecurity Research. (2025, December 12). Cyberattack costs in 2025: Statistics, trends, and real examples. https://www.expressvpn.com/blog/the-true-cost-of-cyber-attacks-in-2024-and-beyond/

Secureframe. (2025). 60+ phishing attack statistics: Insights for 2026. https://secureframe.com/blog/phishing-attack-statistics

Total Assure. (2026, February). Average cost of a phishing attack in 2025. https://www.totalassure.com/blog/average-cost-phishing-attack-2025

Note: All prior IBM Security and Sophos references from Examples 1 and 2 still apply for breach cost baseline.

Value Matrix

The ideas in this article are based in large part on the public presentations and articles of Arijit Sengupta and his team:

Gartner Data and Analytics Summit Showcase March 21, 2019: https://youtu.be/XA2FhDo3hm4

Arijit Sengupta, et al., Take the AI Challenge, https://aible.com (collected May 8th, 2019)
